The First Workshop on Commonsense Reasoning and Knowledge Bases (CSKB) at AKBC 2021

Recent advances in large pre-trained language models have shown that machines can directly learn large quantities of commonsense knowledge through self-supervised learning on raw text. However, they still fall short of human-like understanding: they make inconsistent predictions, learn to exploit spurious patterns, and fail to robustly apply learned knowledge to downstream applications. Consequently, the development and integration of unpaired, outside knowledge representation sources remains critically important to provide machine commonsense engines with scaffolding to learn structured reasoning. We are organizing this workshop to encourage discussion of current progress on building machines with commonsense knowledge and reasoning abilities, with a special focus on commonsense knowledge bases (CSKBs). The workshop will be co-located with AKBC 2021.

Latest News

- Oct 24, 2021: The videos of the invited talks, the panel discussion, and the accepted papers are available here.

- Oct 1, 2021: The program and the accepted papers have been updated.

- Sep 14, 2021: Online registration for AKBC 2021 is now open! Please register here.

- Aug 5, 2021: The submission deadline is extended to August 20, 2021.

- Jul 6, 2021: The first version of our call for papers is out.

Registration

Please register for AKBC 2021 via this link and select the CSKB workshop among the Day 2 workshop options. There is no separate fee for workshop registration; the conference registration ($50 for non-students, $30 for students) includes all workshops.

Important Dates

- All paper submissions due: August 20, 2021 (extended from August 5)

- Notification of acceptance: September 15, 2021 (originally September 10)

- Camera-ready papers due: October 5, 2021 (originally September 30)

- Workshop: October 8, 2021 (originally October 5)

All deadlines are 11:59 pm UTC-12 (“anywhere on Earth”).

Program

The workshop will be held over Zoom. Get the Zoom link from the AKBC workshops page.

| Start (PST) | End (PST) | Session |
|---|---|---|
| 08:00 | 08:05 | Opening remarks (Video) |
| 08:05 | 08:40 | Invited Speaker #1: Rachel Rudinger, “When Pigs Fly and Birds Don’t: Exploring Defeasible Inference in Natural Language” (Video) |
| 08:40 | 09:00 | 2-minute lightning talks, paper IDs: [2, 3, 4, 6, 7, 8, 14, 15, 17] (videos are linked below) |
| 09:00 | 09:35 | Invited Speaker #2: Maarten Sap, “Positive AI with Social Commonsense Models” (Video) |
| 09:35 | 10:05 | Break |
| 10:05 | 10:40 | Invited Speaker #3: Sara Hooker, “What can compression tell us about generalization?” (Video) |
| 10:40 | 11:00 | 2-minute lightning talks, paper IDs: [1, 5, 9, 10, 11, 12, 13, 16, 18] (videos are linked below) |
| 11:00 | 11:55 | Panel Discussion (Video) |
| 11:55 | 12:20 | Break |
| 12:20 | 12:55 | Invited Speaker #4: Xiang Ren, “Commonsense Reasoning in the Wild” (Video) |
| 12:55 | 13:00 | Closing Statements (Video) |

Invited Talks and Panel Discussion

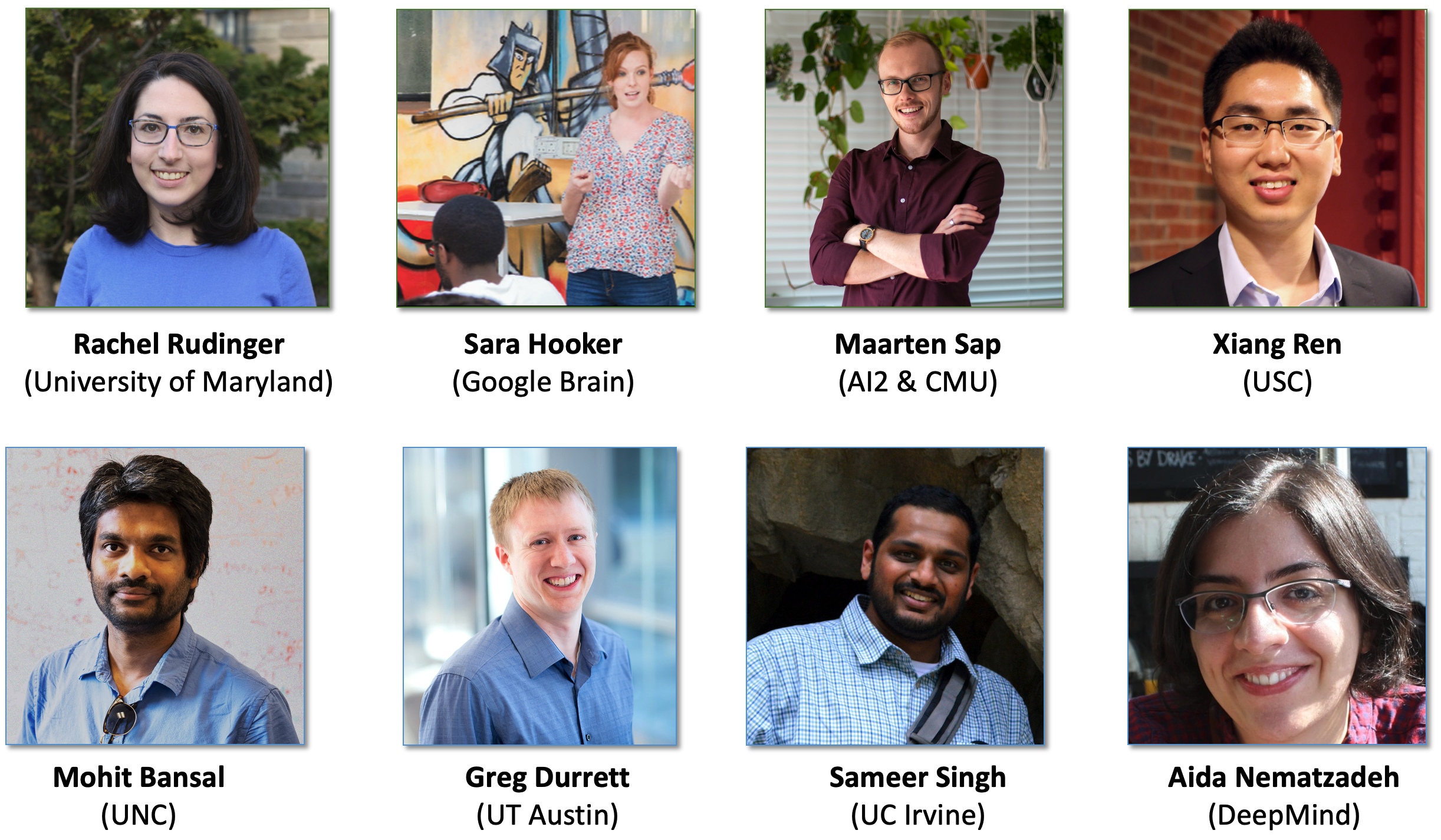

Speakers

We’re excited to have the following keynote speakers at CSKB 2021:

Rachel Rudinger, University of Maryland.

When Pigs Fly and Birds Don’t: Exploring Defeasible Inference in Natural Language

Homepage: https://rudinger.github.io/

Abstract: Commonsense reasoning tasks are often posed in terms of soft inferences: given a textual description of a scenario, determine which inferences are likely or plausibly true. For example, if a person drops a glass, it is likely to shatter when it hits the ground. A hallmark of such inferences is that they are defeasible, meaning they may be undermined or retracted with the introduction of new information. (E.g., we no longer infer that the dropped glass is likely to have shattered upon learning that it landed on a soft pile of laundry.) While defeasible reasoning is a long-standing topic of research in Artificial Intelligence (McCarthy, 1980; McDermott and Doyle, 1980; Reiter, 1980), it is less well studied in the context of contemporary text-based inference tasks, like Recognizing Textual Entailment (Dagan et al., 2005), or Natural Language Inference (MacCartney, 2009; Bowman et al., 2015). In this talk, I will present a new line of work that merges traditional defeasible reasoning with contemporary data-driven textual inference tasks. I argue that defeasible inference is a broadly applicable framework for different types of language inference tasks, and present examples for physical, temporal, and social reasoning.

Bio: Rachel Rudinger is an Assistant Professor of Computer Science at the University of Maryland, College Park. Her research focuses on the acquisition of commonsense knowledge through language and the concomitant issue of social biases and stereotyping in NLP technology. She earned her Ph.D. in Computer Science from Johns Hopkins University in 2019, where she was a founding member of the Decompositional Semantics Initiative. From 2019 to 2020, she was a Young Investigator at the Allen Institute for AI in Seattle.

Sara Hooker, Google Brain.

What can compression tell us about generalization?

Homepage: https://www.sarahooker.me/

Abstract: TBD

Bio: Sara Hooker is a research scientist at Google Brain doing deep learning research on training models beyond test-set accuracy to fulfill multiple criteria. Her main research interests gravitate towards interpretability, model compression and security as well as the impact of design choices like hardware and randomness on generalization behavior. She is one of the founding organizers of the Trustworthy ML Initiative. In 2014, she founded Delta Analytics, a non-profit dedicated to bringing technical capacity to help non-profits across the world use machine learning for good.

Maarten Sap, Allen Institute for AI (AI2) & CMU.

Positive AI with Social Commonsense Models

Homepage: https://homes.cs.washington.edu/~msap/

Abstract: To effectively understand language and safely communicate with humans, machines must not only grasp the surface meanings of texts, but also their underlying social meaning. This requires understanding interpersonal social commonsense, such as knowing to thank someone for giving you a present, as well as understanding harmful social biases and stereotypes. Failure to account for these social and power dynamics could cause models to produce redundant, rude, or even harmful outputs. In this talk, I will describe my research on enabling machines to reason about social dynamics and social biases in text. I will first discuss ATOMIC, the first large-scale knowledge graph of social and interpersonal commonsense knowledge, with which machines can be taught to reason about the causes and effects of everyday events. Then, I will show how we can make machines understand and detect social biases in language, using Social Bias Frames, a new structured formalism for distilling biased implications of language. I will conclude with future research directions on making NLP systems more socially-aware and equitable, and how to use language technologies for positive societal impact.

Bio: Maarten Sap is a postdoc/young investigator at the Allen Institute for AI (AI2) on project MOSAIC, and will join CMU's LTI department as an assistant professor in Fall 2022. His research focuses on making NLP systems socially intelligent, and understanding social inequality and bias in language. He has presented his work at top-tier NLP and AI conferences, receiving a best short paper nomination at ACL 2019 and a best paper award at the WeCNLP 2020 summit. Additionally, he and his team won the inaugural 2017 Amazon Alexa Prize, a social chatbot competition. He received his PhD from the University of Washington's Paul G. Allen School of Computer Science & Engineering, where he was advised by Yejin Choi and Noah Smith. In the past, he has interned at the Allen Institute for AI working on social commonsense reasoning, and at Microsoft Research working on deep learning models for understanding human cognition.

Xiang Ren, University of Southern California.

Commonsense Reasoning in the Wild

Homepage: http://ink-ron.usc.edu/xiangren/

Abstract: Current NLP systems can answer commonsense questions or write fluent stories that produce impressive scores on benchmark datasets. However, most of this progress is evaluated using static, closed-domain datasets created for individual tasks. To deploy commonsense reasoning services in the wild, we need systems that can generate answers in an open-ended way, can perform robust logical reasoning, and can generalize across diverse task formats, domains, and datasets. In this talk, I will share three pieces of work that introduce new formulations of commonsense reasoning challenges as well as novel evaluation protocols to address the above issues. We hope to encourage more effort on proposing "dynamic", general-purpose commonsense reasoning challenges to evaluate progress.

Bio: Xiang Ren is an assistant professor at the USC Computer Science Department, a Research Team Leader at USC ISI, and the PI of the Intelligence and Knowledge Discovery (INK) Lab at USC. Previously, he spent time as a research scholar at the Stanford NLP Group and received his Ph.D. in Computer Science from the University of Illinois Urbana-Champaign. Dr. Ren works on knowledge acquisition and reasoning in natural language processing, with a focus on developing human-centered and label-efficient computational methods for building trustworthy NLP systems. He has received an NSF CAREER Award, a Best Paper runner-up award at The Web Conference, the ACM SIGKDD Doctoral Dissertation Award, and several research awards from Google, Amazon, JP Morgan, Sony, and Snapchat. He was named to Forbes' 30 Under 30 Asia list in 2019.

Panel Discussion

We will also be holding a panel discussion with the invited speakers as well as the following panelists:

- Mohit Bansal, University of North Carolina at Chapel Hill

- Greg Durrett, UT Austin

- Sameer Singh, UC Irvine

- Aida Nematzadeh, DeepMind

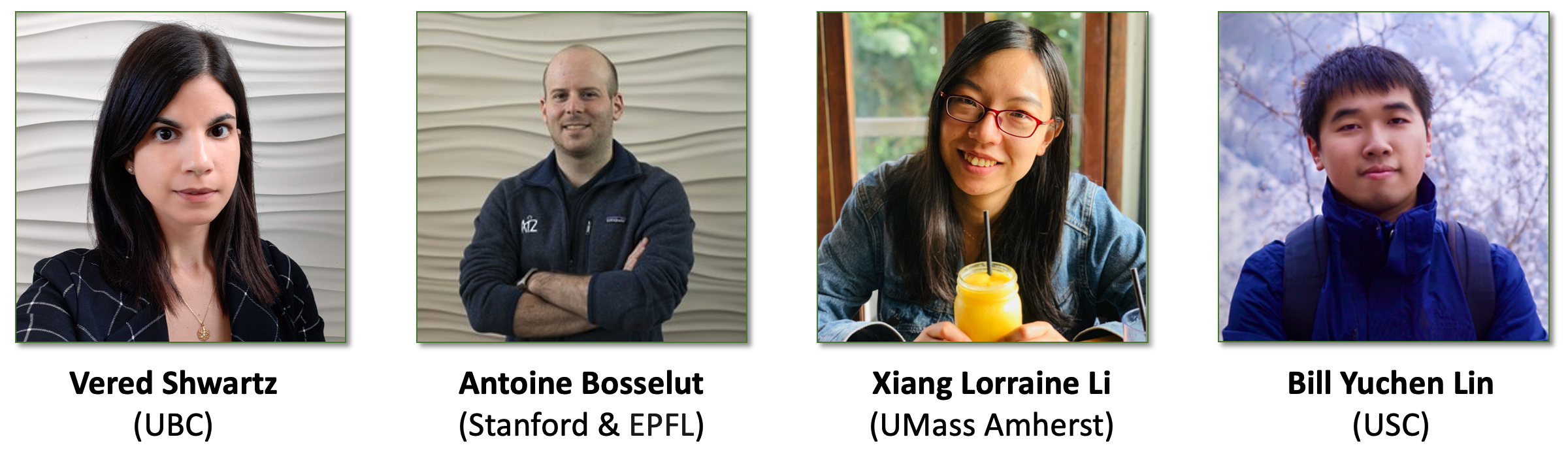

Organizers

Vered Shwartz, University of British Columbia.

Vered Shwartz is a postdoctoral researcher at the Allen Institute for AI (AI2) and the Paul G. Allen School of Computer Science & Engineering at the University of Washington. She will join the Department of Computer Science at the University of British Columbia as an Assistant Professor in Fall 2021. Her research interests include computational semantics and pragmatics, and commonsense reasoning. She co-organized the ACL 2018 Student Research Workshop, the SemEval 2018 shared task on hypernymy discovery, the AAAI 2020 Workshop on Reasoning for Complex Question Answering (Special Edition on Commonsense Reasoning), and the EMNLP 2020 workshop on Simple and Efficient NLP, and co-instructed the ACL 2020 tutorial on commonsense reasoning.

Antoine Bosselut, Stanford University & EPFL.

Antoine Bosselut is a postdoctoral researcher at Stanford University and at the Allen Institute for AI (AI2). He will join the School of Computer and Communication Sciences at EPFL as an Assistant Professor in Fall 2021. His research interests lie at the intersection of knowledge representation, reasoning, and NLP. He organized the West Coast NLP Summit (WeCNLP) from 2018 to 2020, the NeuralGen workshop at NAACL 2019, and the GEM workshop at ACL 2021, and co-instructed the ACL 2020 tutorial on commonsense reasoning, the EMNLP 2020 tutorial on neural text generation, and the AAAI 2021 tutorial on commonsense reasoning.

Xiang Lorraine Li, UMass Amherst.

Xiang Lorraine Li is a Ph.D. student at UMass Amherst advised by Andrew McCallum. Previously, she completed her Master's at the University of Chicago while researching with Kevin Gimpel at TTIC. Her research interests mainly lie in learning efficient commonsense knowledge representations with specific built-in model biases (e.g., the transitivity bias of Is-A relations) and examining commonsense knowledge in large language models. She serves as a program committee member (reviewer) for multiple conferences and workshops, including AKBC, EMNLP, ACL, and AAAI.

Bill Yuchen Lin, University of Southern California.

Bill Yuchen Lin is a Ph.D. candidate at the University of Southern California, advised by Prof. Xiang Ren. His research goal is to teach machines to think, talk, and act with commonsense knowledge and commonsense reasoning ability like humans do. Towards this ultimate goal, he has been developing knowledge-aware reasoning methods (e.g., KagNet, MHGRN, DrFact) and constructing benchmark datasets (e.g., CommonGen and RiddleSense). He also initiated an online compendium of commonsense reasoning research, which serves as a portal site for the community. He has served as a program committee member for ACL, EMNLP, NAACL, ICLR, NeurIPS, and other venues.

Accepted Papers

Please note that all papers are non-archival. Full details for each paper are available on OpenReview.

Regular Workshop Papers

- [7] Artefact Retrieval: Overview of NLP Models with Knowledge Base Access (video)

- [3] Retrieve, Caption, Generate: Visual Grounding for Enhancing Commonsense in Text Generation Models (video)

- [18] Story Generation with Commonsense Knowledge Graphs and Axioms (video)

- [17] No Need to Know Everything! Efficiently Augmenting Language Models With External Knowledge (video)

- [15] COPA-SSE: Semi-Structured Explanations for Commonsense Reasoning (video)

- [14] CoSe-Co: Sentence Conditioned Generative CommonSense Contextualizer for Language Models (video)

Previously Published Papers (Extended Abstracts)

- [1] TellMeWhy: A Dataset for Answering Why-Questions in Narratives (video)

- [2] Perhaps PTLMs should go to School – A Task to Assess Open Book and Closed Book QA (video)

- [5] Modeling Worlds in Text (video)

- [16] Like hiking? You probably enjoy nature: Persona-grounded Dialog with Commonsense Expansions (video)

- [13] Do Language Models Perform Generalizable Commonsense Inference? (video)

- [12] Retrieval Enhanced Model for Commonsense Generation (video)

- [11] Prompting Contrastive Explanations for Commonsense Reasoning Tasks (video)

- [10] COM2SENSE: A Commonsense Reasoning Benchmark with Complementary Sentences (video)

- [9] Fusing Context Into Knowledge Graph for Commonsense Question Answering (video)

- [8] Explanations for CommonsenseQA: New Dataset and Models (video)

- [6] On Commonsense Cues in BERT for Solving Commonsense Tasks (video)

- [4] Could you give me a hint? Generating inference graphs for defeasible reasoning (video)

Call for papers

Topics of Interest

The topics of interest include, but are not limited to:

- Resources: acquiring commonsense knowledge (from text corpora, images, videos, pre-trained neural models, etc.); constructing and completing (semi-)structured CSKBs; consolidating CSKBs under unified schemas.

- Benchmarks: designing challenging tasks and building datasets to evaluate models’ commonsense knowledge and reasoning abilities; designing new evaluation schemas and metrics for commonsense reasoning tasks, particularly for open-ended and generative tasks.

- Methods: methods for commonsense reasoning tasks; methods that integrate CSKBs and neural models; methods that use CSKBs to improve the interpretability and explainability of neural models for commonsense reasoning and more.

- Analysis: methods to probe commonsense knowledge from NLP models; methods to understand reasoning mechanisms of existing methods; methods that identify limitations of existing methods for AI applications (including but not limited to NLP, CV and robotics) due to lack of commonsense knowledge.

Submission Guide

We solicit two categories of papers:

- Workshop papers: describing new, previously unpublished research in this field. Submissions should follow the AKBC 2021 style guidelines and contain up to 10 pages, excluding references and appendices (which should be placed after the references). Submissions will be subject to a single-blind review process (i.e., they need not be anonymized). Final versions of accepted papers will be allowed one additional page of content so that reviewer comments can be taken into account.

- Published papers: papers on topics relevant to the workshop theme, previously published at NLP or ML conferences. These papers can be submitted in their original formats. Submissions will be reviewed for fit to the workshop topics.

In both categories, accepted papers will be published on the workshop website (note that the workshop is non-archival), and will be presented at the workshop either as a talk or a poster.

Submission site: https://openreview.net/group?id=AKBC.ws/2021/Workshop/CSKB

Program Committee

- Faeze Brahman, University of California, Santa Cruz

- Jack Hessel, Allen Institute for Artificial Intelligence

- Liwei Jiang, University of Washington

- Niket Tandon, Allen Institute for Artificial Intelligence

- Rajarshi Das, UMass

- Tuhin Chakrabarty, Columbia University

Contact

Please email cskb-akbc21@googlegroups.com if you have any questions.